We have already taught computers to find the important features and attributes on their own. What should we teach them next? -- David 9

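Anyone who has run a real problem through a deep learning framework knows the feeling: it is amazing that the network can learn the important features by itself! So what should we teach computers next? Perhaps to discover new, previously unknown features?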

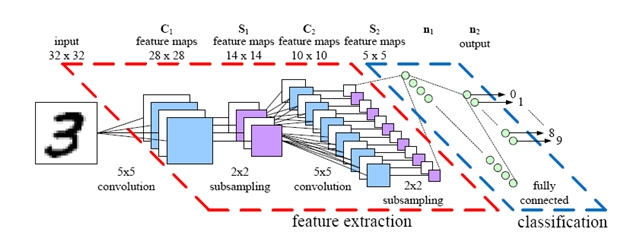

In a convolutional neural network (CNN), each convolution kernel is a feature the CNN has learned; see David 9's earlier posts on CNNs for details.

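Today's protagonist is Keras, whose simplicity and ease of use went well beyond my (David 9's) expectations. Keras is a high-level wrapper over a backend such as TensorFlow, and it takes a solid step toward making deep learning simple and easy to use.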

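So today we will write the Hello World of Keras: a CNN that recognizes handwritten digits. First, a quick recap of the CNN architecture:

The input we need to handle is grayscale pixel images like this:

Recognizing the handwritten digits 0-9 takes only about 60 lines of code, and it is easy to follow from start to finish. Let's first look at the output of a complete run on a single machine: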

(python3_env) yanchao727@yanchao727-VirtualBox:~$ python keras_mnist_cnn.py
Using TensorFlow backend.
x_train shape: (60000, 28, 28, 1)
60000 train samples
10000 test samples
Train on 60000 samples, validate on 10000 samples
Epoch 1/12
60000/60000 [==============================] - 446s - loss: 0.3450 - acc: 0.8933 - val_loss: 0.0865 - val_acc: 0.9735
Epoch 2/12
60000/60000 [==============================] - 415s - loss: 0.1246 - acc: 0.9627 - val_loss: 0.0565 - val_acc: 0.9819
Epoch 3/12
60000/60000 [==============================] - 389s - loss: 0.0957 - acc: 0.9717 - val_loss: 0.0493 - val_acc: 0.9842
Epoch 4/12
60000/60000 [==============================] - 385s - loss: 0.0805 - acc: 0.9761 - val_loss: 0.0413 - val_acc: 0.9865
Epoch 5/12
60000/60000 [==============================] - 366s - loss: 0.0708 - acc: 0.9788 - val_loss: 0.0361 - val_acc: 0.9874
Epoch 6/12
60000/60000 [==============================] - 368s - loss: 0.0619 - acc: 0.9824 - val_loss: 0.0355 - val_acc: 0.9875
Epoch 7/12
60000/60000 [==============================] - 363s - loss: 0.0585 - acc: 0.9826 - val_loss: 0.0345 - val_acc: 0.9875
Epoch 8/12
60000/60000 [==============================] - 502s - loss: 0.0527 - acc: 0.9837 - val_loss: 0.0339 - val_acc: 0.9883
Epoch 9/12
60000/60000 [==============================] - 444s - loss: 0.0501 - acc: 0.9852 - val_loss: 0.0309 - val_acc: 0.9891
Epoch 10/12
60000/60000 [==============================] - 358s - loss: 0.0472 - acc: 0.9861 - val_loss: 0.0308 - val_acc: 0.9897
Epoch 11/12
60000/60000 [==============================] - 358s - loss: 0.0441 - acc: 0.9872 - val_loss: 0.0330 - val_acc: 0.9893
Epoch 12/12
60000/60000 [==============================] - 381s - loss: 0.0431 - acc: 0.9871 - val_loss: 0.0304 - val_acc: 0.9901
Test loss: 0.0303807387119
Test accuracy: 0.9901

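So the script we ran is keras_mnist_cnn.py, and it ends up at about 99% prediction accuracy. Let's start by explaining the output:

The training samples are 28*28-pixel, single-channel grayscale images, 60000 of them in total.

The test set contains 10000 samples.

One epoch is one complete pass over the data (every training sample has been used once). We ran 12 epochs here, and by the last one the model had converged to 99.01% prediction accuracy.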
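As a small worked example of what an epoch means in numbers, the sketch below counts the weight updates per epoch and adds up the per-epoch times from the log. It assumes the batch size of 128 that the script sets later; the variable names are illustrative only.

```python
import math

train_samples = 60000
batch_size = 128      # value used later in the script
epochs = 12

# Each epoch walks through the training set once, one batch per weight update.
steps_per_epoch = math.ceil(train_samples / batch_size)
total_steps = steps_per_epoch * epochs

# Seconds per epoch, copied from the training log shown earlier.
epoch_seconds = [446, 415, 389, 385, 366, 368, 363, 502, 444, 358, 358, 381]
total_minutes = sum(epoch_seconds) / 60

print("updates per epoch:", steps_per_epoch)
print("updates in total:", total_steps)
print("training time (min):", round(total_minutes, 1))
```

On the CPU-only virtual machine used for this run, the whole training therefore takes well over an hour.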

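loss is the loss on the training set and acc is the training accuracy. val_loss and val_acc are the loss and accuracy on the validation data, which in this script is the test set.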
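To make the loss and acc columns concrete, here is a minimal sketch in plain Python of how categorical cross-entropy and accuracy can be computed for a small batch. The function names and the toy probabilities are illustrative assumptions, not Keras internals.

```python
import math

def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean cross-entropy over a batch of one-hot targets and predicted probabilities."""
    total = 0.0
    for target, probs in zip(y_true, y_pred):
        # Only the probability assigned to the true class contributes to the sum.
        total += -sum(t * math.log(max(p, eps)) for t, p in zip(target, probs))
    return total / len(y_true)

def accuracy(y_true, y_pred):
    """Fraction of samples whose most probable class matches the target class."""
    hits = 0
    for target, probs in zip(y_true, y_pred):
        if target.index(max(target)) == probs.index(max(probs)):
            hits += 1
    return hits / len(y_true)

# Toy batch: 3 samples, 3 classes (hypothetical values).
y_true = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
y_pred = [[0.8, 0.1, 0.1], [0.2, 0.7, 0.1], [0.5, 0.3, 0.2]]

print("loss:", categorical_crossentropy(y_true, y_pred))
print("acc:", accuracy(y_true, y_pred))
```

The third sample is misclassified, so the accuracy is 2/3, and its low probability for the true class dominates the loss.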

Next, let's look at the code:

from __future__ import print_function
import keras
from keras.datasets import mnist
from keras.models import Sequential
from keras.layers import Dense, Dropout, Flatten
from keras.layers import Conv2D, MaxPooling2D
from keras import backend as K

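We start by importing a few basic pieces, including:

- The mnist data source.

- The Sequential class, a container that stacks neural network layers such as the Dense fully connected layer, the Dropout layer, the Conv2D convolution layer, and so on.

- The backend module (imported as K). Keras supports two backends, TensorFlow and Theano, and the choice can be configured in $HOME/.keras/keras.json.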
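As a sketch of what that configuration file can look like, the snippet below parses a sample keras.json with the standard json module. The values shown are illustrative; the actual contents depend on your installation and Keras version.

```python
import json

# Sample contents of $HOME/.keras/keras.json (values are illustrative).
sample_config = """
{
    "image_data_format": "channels_last",
    "epsilon": 1e-07,
    "floatx": "float32",
    "backend": "tensorflow"
}
"""

config = json.loads(sample_config)
print("backend:", config["backend"])
print("image_data_format:", config["image_data_format"])
```

Switching the "backend" entry to "theano" is how the other backend would be selected, and "image_data_format" is the setting the script later queries through K.image_data_format().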

Next, we prepare the training and test sets, along with a few important parameters:

# batch_size needs to be chosen with care: very small batches make training slow
# and the gradient estimates noisy, while very large batches can hurt generalization
batch_size = 128

# The handwritten digits 0-9 give 10 classes in total
num_classes = 10

# 12 full passes over the training data are about enough
epochs = 12

# Input images are 28*28-pixel grayscale images
img_rows, img_cols = 28, 28

# Loading the training and test sets is very convenient
(x_train, y_train), (x_test, y_test) = mnist.load_data()

# Keras accepts two input layouts: channels first or channels last.
# The only difference is where the channel axis sits.
if K.image_data_format() == 'channels_first':
    x_train = x_train.reshape(x_train.shape[0], 1, img_rows, img_cols)
    x_test = x_test.reshape(x_test.shape[0], 1, img_rows, img_cols)
    input_shape = (1, img_rows, img_cols)
else:
    x_train = x_train.reshape(x_train.shape[0], img_rows, img_cols, 1)
    x_test = x_test.reshape(x_test.shape[0], img_rows, img_cols, 1)
    input_shape = (img_rows, img_cols, 1)

# Convert to float32 so that dividing by 255 yields fractional values in [0, 1]
x_train = x_train.astype('float32')
x_test = x_test.astype('float32')
x_train /= 255
x_test /= 255

print('x_train shape:', x_train.shape)
print(x_train.shape[0], 'train samples')
print(x_test.shape[0], 'test samples')

# One-hot encode the class labels 0-9 so they match the 10-way softmax output
y_train = keras.utils.to_categorical(y_train, num_classes)
y_test = keras.utils.to_categorical(y_test, num_classes)
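The three preprocessing steps above (choosing the channel layout, scaling pixels, and one-hot encoding labels) can be sketched in plain Python without Keras or NumPy. The helper names below are hypothetical and only mirror what the script does on whole arrays.

```python
def input_shape_for(data_format, rows=28, cols=28, channels=1):
    """Return the per-sample shape for a given Keras image data format."""
    if data_format == "channels_first":
        return (channels, rows, cols)
    return (rows, cols, channels)

def scale_pixels(pixels):
    """Map integer intensities 0..255 to floats in [0, 1]."""
    return [p / 255 for p in pixels]

def to_one_hot(label, num_classes=10):
    """Encode an integer class label as a one-hot list."""
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec

print(input_shape_for("channels_first"))
print(input_shape_for("channels_last"))
print(scale_pixels([0, 51, 255]))
print(to_one_hot(3))
```

The one-hot vector for the label 3 has a single 1.0 at index 3, which is the form the 10-way softmax output is compared against.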

Then comes the exciting, and astonishingly concise, part: building the CNN and training it:

# The handy Sequential class lets us stack different neural network layers flexibly
model = Sequential()

# Add a 2D convolution layer with 32 output channels (filters), ReLU activation,
# and a 3*3-pixel kernel window
model.add(Conv2D(32, kernel_size=(3, 3),
                 activation='relu',
                 input_shape=input_shape))

# A convolution layer with 64 channels
model.add(Conv2D(64, kernel_size=(3, 3), activation='relu'))

# A 2*2-pixel max pooling layer
model.add(MaxPooling2D(pool_size=(2, 2)))

# Apply Dropout with rate 0.35 to the pooling output
model.add(Dropout(0.35))

# Flatten the feature maps into a single vector, here [12, 12, 64] -> [9216]
model.add(Flatten())

# Fully connected layer over all flattened values, 128 outputs, ReLU activation
model.add(Dense(128, activation='relu'))

# Apply Dropout with rate 0.5 to the output of the fully connected layer
model.add(Dropout(0.5))

# Final fully connected layer with softmax activation: one probability per digit 0-9
model.add(Dense(num_classes, activation='softmax'))

# Use the cross-entropy loss and the Adadelta optimizer
model.compile(loss=keras.losses.categorical_crossentropy,
              optimizer=keras.optimizers.Adadelta(),
              metrics=['accuracy'])

# The exciting part: training
model.fit(x_train, y_train, batch_size=batch_size, epochs=epochs,
          verbose=1, validation_data=(x_test, y_test))
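To see how the tensor shapes change from layer to layer, the following sketch redoes the arithmetic by hand. It assumes the Keras defaults for these layers (no padding and stride 1 for the convolutions, stride equal to the pool size for pooling); the helper name is illustrative.

```python
def conv_output(size, kernel, stride=1):
    """Output width/height of an unpadded ('valid') convolution or pooling window."""
    return (size - kernel) // stride + 1

size, channels = 28, 1
params = 0

# Conv2D(32, 3x3): 3*3*in_channels weights per filter, plus one bias per filter.
params += 3 * 3 * channels * 32 + 32
size, channels = conv_output(size, 3), 32      # 26 x 26 x 32

# Conv2D(64, 3x3)
params += 3 * 3 * channels * 64 + 64
size, channels = conv_output(size, 3), 64      # 24 x 24 x 64

# MaxPooling2D(2x2) halves each spatial dimension and has no weights.
size = conv_output(size, 2, stride=2)          # 12 x 12 x 64

# Flatten, then Dense(128) and Dense(10).
flat = size * size * channels                  # 9216
params += flat * 128 + 128
params += 128 * 10 + 10

print("flattened features:", flat)
print("trainable parameters:", params)
```

Almost all of the parameters sit in the first fully connected layer, which is why the Dropout layers around it matter for regularization.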

Once training has finished, we can compute the prediction accuracy:

score = model.evaluate(x_test, y_test, verbose=0)

print('Test loss:', score[0])

print('Test accuracy:', score[1])

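That completes training a CNN for MNIST handwritten digit recognition. Simple, isn't it? If the comments are not detailed enough, try running the source yourself, or see the references at the bottom of this post.

Complete source of keras_mnist_cnn.py: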

'''Trains a simple convnet on the MNIST dataset.

Gets to 99.25% test accuracy after 12 epochs
(there is still a lot of margin for parameter tuning).
16 seconds per epoch on a GRID K520 GPU.
'''

from __future__ import print_function
import keras
from keras.datasets import mnist
from keras.models import Sequential
from keras.layers import Dense, Dropout, Flatten
from keras.layers import Conv2D, MaxPooling2D
from keras import backend as K

batch_size = 128
num_classes = 10
epochs = 12

# input image dimensions
img_rows, img_cols = 28, 28

# the data, shuffled and split between train and test sets
(x_train, y_train), (x_test, y_test) = mnist.load_data()

if K.image_data_format() == 'channels_first':
    x_train = x_train.reshape(x_train.shape[0], 1, img_rows, img_cols)
    x_test = x_test.reshape(x_test.shape[0], 1, img_rows, img_cols)
    input_shape = (1, img_rows, img_cols)
else:
    x_train = x_train.reshape(x_train.shape[0], img_rows, img_cols, 1)
    x_test = x_test.reshape(x_test.shape[0], img_rows, img_cols, 1)
    input_shape = (img_rows, img_cols, 1)

x_train = x_train.astype('float32')
x_test = x_test.astype('float32')
x_train /= 255
x_test /= 255
print('x_train shape:', x_train.shape)
print(x_train.shape[0], 'train samples')
print(x_test.shape[0], 'test samples')

# convert class vectors to binary class matrices
y_train = keras.utils.to_categorical(y_train, num_classes)
y_test = keras.utils.to_categorical(y_test, num_classes)

model = Sequential()
model.add(Conv2D(32, kernel_size=(3, 3),
                 activation='relu',
                 input_shape=input_shape))
model.add(Conv2D(64, kernel_size=(3, 3), activation='relu'))
model.add(MaxPooling2D(pool_size=(2, 2)))
model.add(Dropout(0.35))
model.add(Flatten())
model.add(Dense(128, activation='relu'))
model.add(Dropout(0.5))
model.add(Dense(num_classes, activation='softmax'))

model.compile(loss=keras.losses.categorical_crossentropy,
              optimizer=keras.optimizers.Adadelta(),
              metrics=['accuracy'])

model.fit(x_train, y_train, batch_size=batch_size, epochs=epochs,
          verbose=1, validation_data=(x_test, y_test))

score = model.evaluate(x_test, y_test, verbose=0)
print('Test loss:', score[0])
print('Test accuracy:', score[1])

References:

This article is original to "David 9's blog". To repost it, please get in touch via WeChat: david9ml, or by email: yanchao727@gmail.com


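Where does the data come from? I cannot connect to https://s3.amazonaws.com/img-datasets/mnist.npz.

I can download it from here (without a VPN). If you run into problems, you can add me on WeChat: david9ml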

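Why does the fully connected layer applied to all the pixels at the end have an output of 128? Where does the 128 come from? Shouldn't it be 10? You have 10 digits here, so aren't there 10 classes?

The 10-class output comes from the softmax layer; look closely at line 25 of the code:

model.add(Dense(num_classes, activation='softmax'))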
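To illustrate the distinction: the 128 is only the width of a hidden fully connected layer, a free design choice, while the final Dense layer produces exactly one score per class and softmax turns those 10 scores into probabilities. The sketch below shows that last step in plain Python; the scores are made-up values, not output from the trained model.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)
    # Subtracting the maximum keeps exp() from overflowing on large scores.
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Ten hypothetical scores, one per digit class 0-9.
scores = [0.1, 0.3, 2.5, 0.0, -1.2, 0.4, 0.2, 0.9, 0.0, -0.5]
probs = softmax(scores)

print("number of outputs:", len(probs))
print("sum of probabilities:", round(sum(probs), 6))
print("predicted digit:", probs.index(max(probs)))
```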

What does the as K on line 7 mean?

Thanks in advance.

It simply gives the imported module the name K. It is only an alias, and this is a common Python idiom.
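The same aliasing works for any module. As a tiny illustration using the standard library rather than Keras (the alias m is an arbitrary choice):

```python
import math as m
import sys

print(m.sqrt(16.0))                # the alias exposes the same functions
print(m is sys.modules["math"])    # the alias refers to the very same module object
```

In the script, K plays the same role for keras.backend, so K.image_data_format() is just a shorter way of writing the full module path.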

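Hello, sorry to bother you. Why is val_acc consistently higher than acc in the training results of this tutorial? Shouldn't the accuracy on the test set be lower? I would appreciate your advice.

Nothing says that test accuracy must be lower than training accuracy; I'm not sure where you picked up that idea.

If you have more questions, you can add me on WeChat: david9ml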
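One likely contributor in this particular model is Dropout: it randomly disables units while training, so acc is measured on a handicapped network, whereas val_acc is measured with Dropout switched off. The acc value is also averaged over the whole epoch, including the early, weaker batches. The sketch below shows the train/inference difference in plain Python; the function is a simplified illustration, not Keras code.

```python
import random

def dropout(values, rate, training, rng):
    """Inverted dropout: zero each value with probability `rate`, during training only."""
    if not training:
        return list(values)            # inference: the layer passes values through
    keep = 1.0 - rate
    # Surviving values are scaled up so the expected sum stays the same.
    return [v / keep if rng.random() < keep else 0.0 for v in values]

rng = random.Random(0)                 # fixed seed so the sketch is repeatable
activations = [1.0] * 8

print("training: ", dropout(activations, 0.5, True, rng))
print("inference:", dropout(activations, 0.5, False, rng))
```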

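How do I make a prediction, given an image or a sample taken from the test set?

Prediction just means running one more forward pass through the trained model to get the result.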
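A minimal sketch of that forward pass is shown below. It assumes a model object with a Keras-style predict(batch) method that returns one row of class probabilities per sample; StubModel is a hypothetical stand-in so the example runs without Keras or a trained network.

```python
def predict_digits(model, batch):
    """Return the most probable class index for every sample in the batch."""
    digits = []
    for row in model.predict(batch):
        row = list(row)
        digits.append(row.index(max(row)))   # argmax over the class probabilities
    return digits

class StubModel:
    """Hypothetical stand-in for a trained model; always favors the digit 7."""
    def predict(self, batch):
        return [[0.01] * 7 + [0.93] + [0.03] * 2 for _ in batch]

# A fake batch holding two blank 28x28 images.
fake_batch = [[[0.0] * 28 for _ in range(28)] for _ in range(2)]
print(predict_digits(StubModel(), fake_batch))
```

With the model trained in this post, the batch could be a slice such as x_test[:1], which keeps the leading batch dimension that the network expects.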